Google releases the most detailed human brain “map” to date

Google releases the most detailed human brain “map” to date: an online, explorable 3D neuron “forest”

In 2019, Google reconstructed a 3D model of the neurons in the Drosophila (fruit fly) brain for the first time. In 2020, it announced the fruit fly “hemibrain” connectome.

Google has now released the H01 human brain imaging dataset, with 130 million synapses and tens of thousands of neurons: the largest sample of its kind to date.

Synapses are the “bridges” of neural networks.

The human brain has roughly 86 billion neurons. Synapses allow the electrical signal in one neuron to be passed on to the next.

For a long time, scientists have dreamed of mapping the structure of a complete brain neural network to understand how the nervous system works.

Have you ever seen a 3D map of the cerebral cortex that was reconstructed automatically at high resolution?

Recently, Google, in collaboration with the Lichtman Laboratory at Harvard University, released the “H01” dataset, a 1.4 PB rendering of a small sample of human brain tissue.

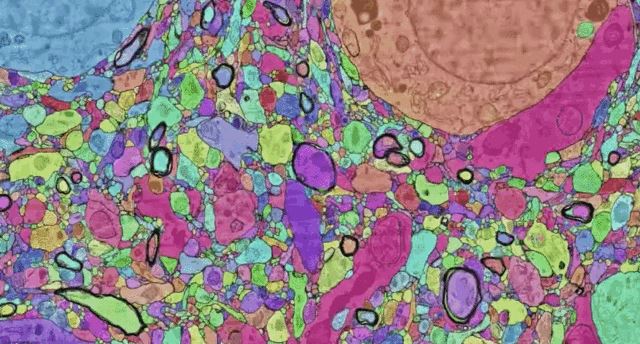

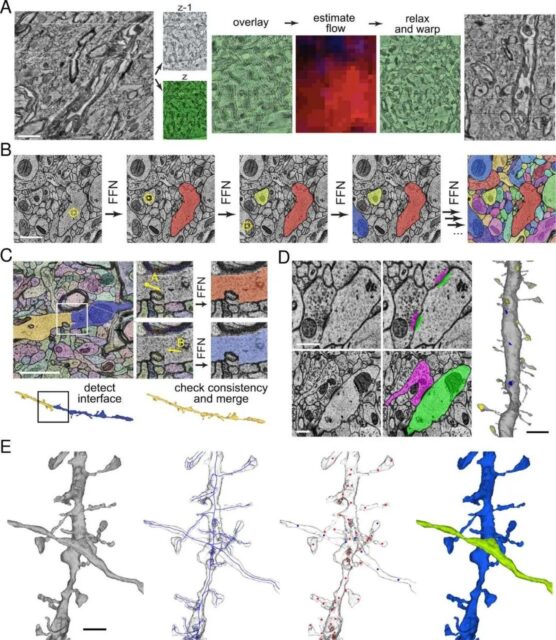

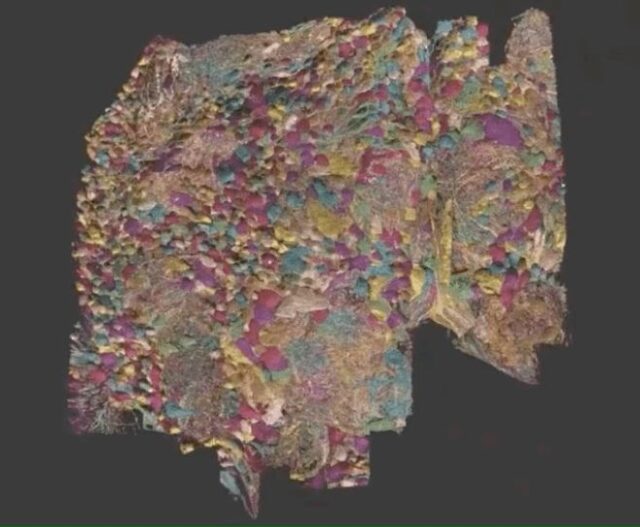

The H01 sample was imaged at 4 nm resolution by serial-section electron microscopy, then reconstructed and annotated using automated computational techniques, yielding a first look at the structure of the human cerebral cortex.

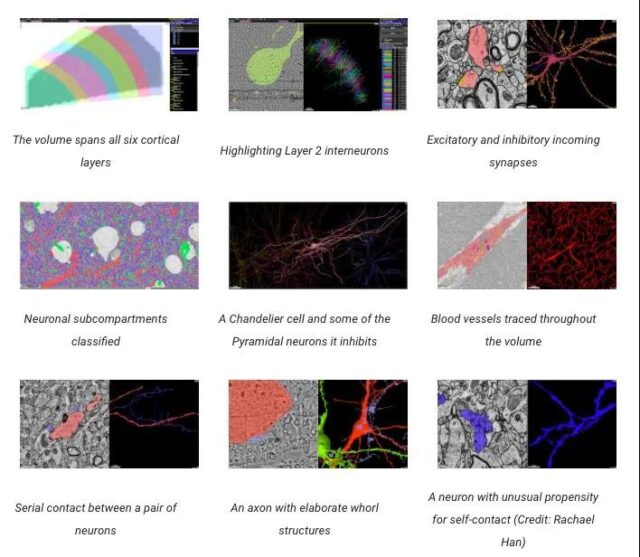

The dataset covers approximately one cubic millimeter of cortical tissue and includes tens of thousands of reconstructed neurons, millions of neuron fragments, 130 million annotated synapses, 104 proofread cells, and many other subcellular annotations and structures.

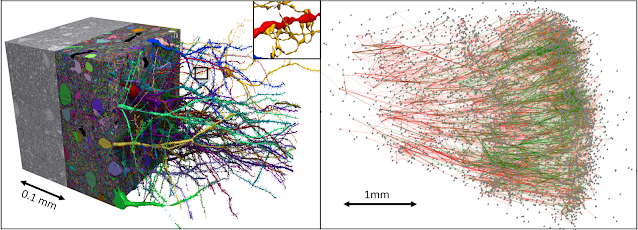

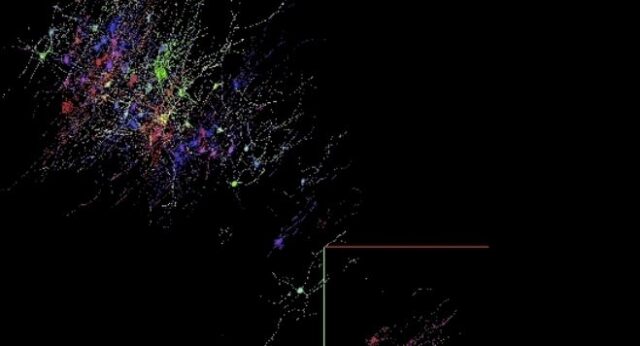

Left: a small subvolume of the dataset | Right: a subgraph of 5,000 neurons in the dataset, with excitatory (green) and inhibitory (red) connections

All of the data can be accessed through Neuroglancer.

H01 is by far the largest sample of cerebral cortex, from any species, to be imaged and reconstructed at this level of detail.

It is also the first large-scale sample for studying the synaptic connectivity of the human cerebral cortex, spanning multiple cell types across all layers of the cortex.

The main goal of this project is to provide a new resource for studying the human brain, and to improve and expand the basic technology of connectomics.

The latest results of this research have been posted on the preprint server bioRxiv under the title “A connectomic study of a petascale fragment of human cerebral cortex”.

“Map” of the cerebral cortex: 130 million synapses, tens of thousands of neurons

First, a word about the remarkable cerebral cortex.

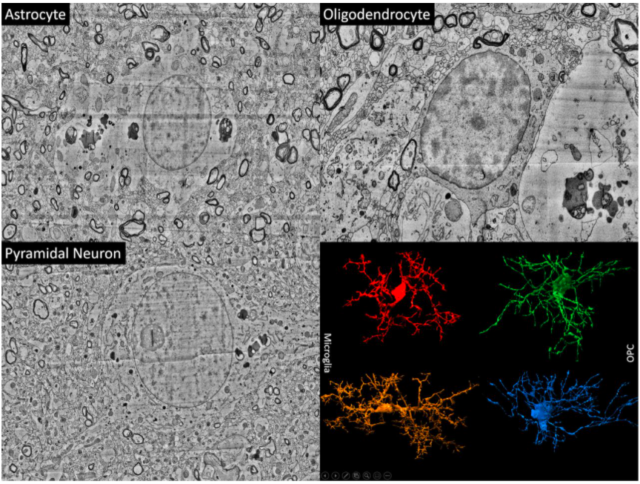

The cerebral cortex is the thin surface layer of the vertebrate brain. It is the evolutionarily most recent and most functionally advanced part of the brain and of the nervous system as a whole, and it shows the greatest variation in size among mammals (it is especially large in humans).

Each region of the cerebral cortex has six layers, and each layer contains different types of nerve cells (such as spiny stellate cells). The cerebral cortex plays a key role in most higher cognitive functions, such as thinking, memory, planning, perception, language, and attention.

Although some progress has been made in understanding the macroscopic organization of this very complex tissue, its organization at the level of individual nerve cells and their interconnecting synapses is largely unknown.

Connectomics of the human brain: from surgical biopsy to 3D database

Mapping the structure of the brain at the resolution of individual synapses requires high-resolution microscopy techniques that can image biochemically stabilized (fixed) tissue.

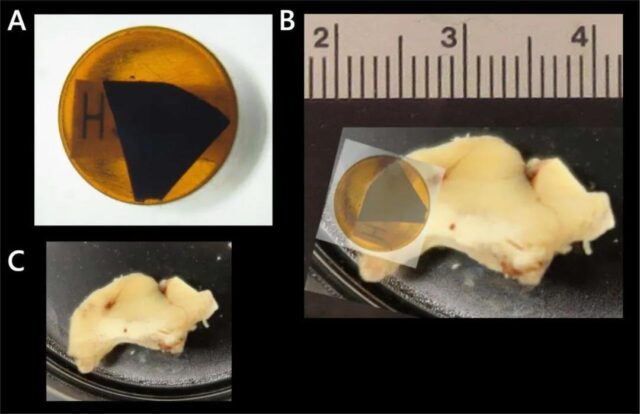

The research team worked with brain surgeons at Massachusetts General Hospital. When operating to treat epilepsy, these surgeons sometimes remove a piece of normal cerebral cortex in order to reach the deeper part of the brain where the seizures originate.

This removed tissue is usually discarded. Here, patients anonymously donated it for research to colleagues in the Lichtman laboratory.

Researchers at Harvard University used an automated tape-collecting ultramicrotome to cut the tissue into approximately 5,300 sections, each about 30 nanometers thick. They mounted the sections on silicon wafers and imaged the tissue at 4 nm resolution in a custom 61-beam parallel scanning electron microscope, which allows rapid image acquisition.

Imaging the 5,300 physical sections produced 225 million individual 2D images.
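The figures above can be combined into a quick back-of-envelope check. The sketch below uses only numbers quoted in this article; treating 1.4 PB as decimal petabytes and dividing it evenly over the 2D images are simplifying assumptions, since the released dataset may contain more than the raw images.

```python
# Figures quoted in the article.
NUM_SECTIONS = 5_300           # physical sections cut from the sample
SECTION_THICKNESS_NM = 30      # approximate thickness of each section
NUM_TILES = 225_000_000        # individual 2D images
DATASET_BYTES = 1.4e15         # 1.4 PB, assuming decimal petabytes


def acquisition_summary():
    """Derive rough per-section and per-image figures from the totals."""
    # Total depth of the stack, converted from nanometers to micrometers.
    thickness_um = NUM_SECTIONS * SECTION_THICKNESS_NM / 1_000
    # Average number of 2D images needed to cover one section.
    tiles_per_section = NUM_TILES / NUM_SECTIONS
    # Average data per 2D image in megabytes (assumes an even split).
    mb_per_tile = DATASET_BYTES / NUM_TILES / 1e6
    return thickness_um, tiles_per_section, mb_per_tile


thickness_um, tiles_per_section, mb_per_tile = acquisition_summary()
print(f"Stack thickness: about {thickness_um:.0f} micrometers")
print(f"Images per section: about {tiles_per_section:,.0f}")
print(f"Data per image: about {mb_per_tile:.1f} MB")
```

Under these assumptions, the stack is only a fraction of a millimeter deep, so the sample is a thin slab rather than a cube, and each section is tiled by tens of thousands of images.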

The research team then stitched and aligned these images computationally to produce a single 3D volume.

Although the quality of the data is generally good, the alignment pipelines had to be robust to a number of challenges, including imaging artifacts, missing sections, changes in microscope parameters, and physical stretching and compression of the tissue.

Once the data were aligned, a multi-scale flood-filling network (FFN) pipeline running on thousands of Google Cloud TPUs was applied to produce a 3D segmentation of each individual cell in the tissue. According to the team, FFN is the first automatic segmentation technique that can produce sufficiently accurate reconstructions.
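To give a feel for what “segmentation” means here, the toy sketch below grows one segment outward from a seed point on a tiny 2D grid. It is a classical seeded flood fill, not the neural-network-based FFN used for H01; the function name `flood_fill_segment` and the toy grid are hypothetical and only illustrate the general idea of expanding a single object from a starting location.

```python
from collections import deque


def flood_fill_segment(mask, seed):
    """Return the set of (row, col) cells reachable from `seed` by
    4-neighbour steps over cells where `mask` is truthy."""
    rows, cols = len(mask), len(mask[0])
    if not mask[seed[0]][seed[1]]:
        return set()  # the seed is not inside any object
    segment = {seed}
    queue = deque([seed])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and mask[nr][nc] and (nr, nc) not in segment:
                segment.add((nr, nc))
                queue.append((nr, nc))
    return segment


# Toy "tissue": 1 marks cell interior, 0 marks background.
toy_mask = [
    [1, 1, 0, 0],
    [0, 1, 0, 1],
    [0, 0, 0, 1],
]
cell_a = flood_fill_segment(toy_mask, (0, 0))
cell_b = flood_fill_segment(toy_mask, (1, 3))
print(sorted(cell_a))
print(sorted(cell_b))
```

In this toy case the two seeds yield two separate segments. Unlike this sketch, which follows a fixed rule on a clean binary mask, the real pipeline has to decide from noisy grayscale electron microscopy images which voxels belong to the same cell.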

Other machine learning pipelines were used to identify and characterize the 130 million synapses, to classify each 3D segment into subcompartments (such as axon, dendrite, or cell body), and to identify other structures of interest, such as myelin and cilia.

The automated reconstruction is not perfect, so about 100 cells in the data were proofread manually.

Over time, the research team hopes to add additional cells to this validated collection through additional manual effort and further development of automation.

H01: roughly 1 cubic millimeter of human brain tissue, captured in 1.4 PB of image data

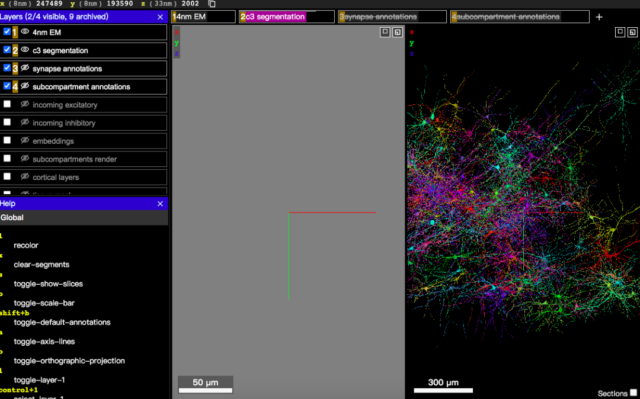

Neuroglancer: Visualization tool of the cerebral cortex

The image data, reconstruction results, and annotations can be viewed through Neuroglancer, an interactive web-based 3D visualization interface that was originally developed to visualize the fruit fly brain.

Neuroglancer is open-source software widely used in the field of connectomics.

To support analysis of the H01 dataset, several new features were introduced, notably the ability to search for specific neurons by type or by other properties in the dataset.

H01 and its annotations in the Neuroglancer interface. Users can select specific cells by cortical layer and cell type and view their incoming and outgoing synapses.

Following the largest Drosophila brain map and neuron 3D model

In 2019, Google, in collaboration with the Howard Hughes Medical Institute and the University of Cambridge, worked from a Drosophila brain that had been cut into thousands of ultra-thin 40-nanometer sections. Each section was imaged by transmission electron microscopy, producing more than 40 trillion pixels of brain imagery, and the 2D images were then aligned into a complete 3D volume of the Drosophila brain. The Flood-Filling Network algorithm, running on TPU chips, was used to trace the neurons through that volume.

This was the first successful 3D reconstruction of Drosophila brain neurons, but it revealed no information about how those neurons are connected.

Drosophila brain reconstruction under 40 trillion pixels

In 2020, Google released the largest and most detailed Drosophila brain map to date, a highly detailed mapping of the neuronal connections in the Drosophila brain.

Early that year, Google and the FlyEM team at the Howard Hughes Medical Institute (HHMI), among others, released the “hemibrain” connectome. The mapped region covers about 25,000 neurons and, by volume, accounts for roughly one-third of the fruit fly brain.

Even with the current work, Google researchers still face technical challenges.

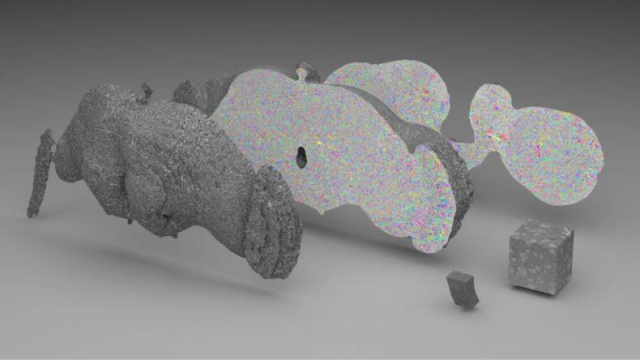

H01 is a petabyte-scale dataset, yet it covers only about one millionth of the volume of the entire human brain.

Extending synapse-level mapping to an entire mouse brain (500 times larger than H01) still presents serious technical challenges, let alone an entire human brain.

One of the challenges is data storage: a mouse brain could generate about 1 exabyte of data, which would be very costly to store.

To address this, Google researchers used machine-learning-based denoising strategies to compress the data at least 17-fold.
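The scale of the storage problem can be estimated from the figures quoted in this article. The sketch below assumes that data volume grows linearly with tissue volume and that the 17-fold compression applies uniformly; both are simplifications, and the helper name `scaled_storage_pb` is hypothetical.

```python
# Figures quoted in the article.
H01_PETABYTES = 1.4             # size of the H01 dataset
MOUSE_BRAIN_FACTOR = 500        # mouse brain volume relative to H01
HUMAN_BRAIN_FACTOR = 1_000_000  # H01 is about one millionth of a human brain
COMPRESSION_RATIO = 17          # minimum compression reported


def scaled_storage_pb(volume_factor, compression=1.0):
    """Estimate storage in petabytes, assuming data size grows linearly
    with tissue volume and compression applies uniformly."""
    return H01_PETABYTES * volume_factor / compression


mouse_raw_pb = scaled_storage_pb(MOUSE_BRAIN_FACTOR)
mouse_compressed_pb = scaled_storage_pb(MOUSE_BRAIN_FACTOR, COMPRESSION_RATIO)
human_raw_pb = scaled_storage_pb(HUMAN_BRAIN_FACTOR)

print(f"Mouse brain, raw: about {mouse_raw_pb:,.0f} PB")
print(f"Mouse brain, compressed: about {mouse_compressed_pb:,.0f} PB")
print(f"Human brain, raw: about {human_raw_pb / 1e6:.1f} ZB")
```

Linear scaling gives several hundred petabytes for a mouse brain, the same order of magnitude as the 1 exabyte quoted above, while a whole human brain at this resolution would reach the zettabyte range.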

Going forward, the sheer size of these datasets will require researchers to develop new strategies for organizing and accessing the rich information contained in connectomic data, and Google researchers say they will continue to work in this direction.
